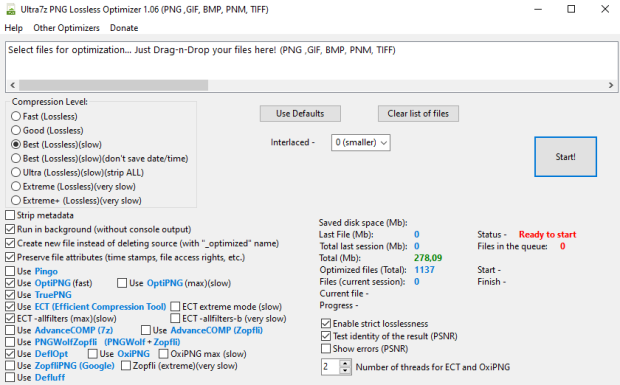

Optimize and convert your image files into smaller PNG files (5-30% smaller) without any loss of quality. The output is fully compatible with the source PNG format. Convert PNG, GIF, BMP, PNM, and TIFF files to the smallest possible PNG. The program runs 6 tools and keeps the best result: OptiPNG, TruePNG, ECT (Efficient Compression Tool), AdvanceCOMP, PNGWolfZopfli, and DeflOpt. You can enable or disable each of them. Files can be processed in batches (drag-and-drop). Reduce the size of your PNG files in one click without quality loss. The program writes the optimized image to a new file whose name ends in «_optimized», so the source file remains intact. It is especially useful for webmasters who want to optimize the images on their sites to improve their position in search results.

Features:

— Lossless compression of image files, reducing their size by 5-30% with no loss of quality.

— 5 modes, from fast to ultra (slow).

— Fully compatible with the original PNG format.

— Supported formats: PNG, GIF, BMP, PNM, TIFF.

— Option «Strip metadata».

— Option «Preserve file attributes (time stamps, file access rights, etc.)».

— You can set «Run in background (without console output)» or uncheck it for manual process control.

How does it work?

Portable Network Graphics (PNG) is a format for storing compressed raster graphics. The compression engine is based on the Deflate method. Unlike other lossless compression schemes, PNG compression does not depend solely on the statistics of the input; the result may vary within wide limits, depending on the compressor’s implementation. A good PNG encoder must be able to make informed decisions about the factors that affect the size of the output. The purpose of this article is to provide information about these factors, and to give advice on implementing efficient PNG encoders.

PNG compression works as a pipeline.

In the first stage, the image pixels are passed through a lossless arithmetic transformation named delta filtering, or simply filtering, and sent further as a (filtered) byte sequence. Filtering does not compress or otherwise reduce the size of the data, but it makes the data more compressible.
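As an illustration of delta filtering, here is a minimal Python sketch of one PNG filter type, Sub, together with its inverse. The function names and the sample row are hypothetical and chosen only for this example; this is not the code of any of the bundled tools. It shows that the filtered row has the same length as the input and can be restored exactly.

```python
def sub_filter(row: bytes, bpp: int = 1) -> bytes:
    """PNG filter type 1 (Sub): each byte minus the byte `bpp` positions to its left, modulo 256."""
    out = bytearray(len(row))
    for i, value in enumerate(row):
        left = row[i - bpp] if i >= bpp else 0
        out[i] = (value - left) & 0xFF
    return bytes(out)


def sub_unfilter(filtered: bytes, bpp: int = 1) -> bytes:
    """Inverse of sub_filter: add back the already reconstructed left neighbour."""
    out = bytearray(len(filtered))
    for i, value in enumerate(filtered):
        left = out[i - bpp] if i >= bpp else 0
        out[i] = (value + left) & 0xFF
    return bytes(out)


# A smooth grayscale ramp: 10, 20, ..., 80 (one byte per pixel).
row = bytes(range(10, 90, 10))
filtered = sub_filter(row)

print(list(filtered))                 # [10, 10, 10, 10, 10, 10, 10, 10]
print(sub_unfilter(filtered) == row)  # True
```

The ramp turns into a run of identical bytes: the data is no smaller, but it is far more repetitive, which is what the later stages exploit.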

In the second stage, the filtered byte sequence is passed through the Ziv-Lempel algorithm (LZ77), producing LZ77 codes that are further compressed by the Huffman algorithm in the third and final stage. The combination of the last two stages is referred to as the Deflate compression, a widely-used, patent-free algorithm for universal, lossless data compression. The maximum size of the LZ77 sliding window in Deflate is 32768 bytes, and the LZ77 matches can be between 3 and 258 bytes long.
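The effect of filtering on Deflate can be sketched with Python's standard `zlib` module, which implements Deflate. The single 256-pixel scanline and the helper names below are assumptions made for this example. Each scanline is prefixed with its filter type byte (0 for None, 1 for Sub), as in a real PNG data stream.

```python
import zlib


def sub_filter(row: bytes) -> bytes:
    """PNG Sub filter for 1 byte per pixel: difference to the left neighbour, modulo 256."""
    return bytes((row[i] - (row[i - 1] if i else 0)) & 0xFF for i in range(len(row)))


def deflate(data: bytes) -> bytes:
    # wbits = 15 selects the largest sliding window Deflate allows: 2**15 = 32768 bytes.
    compressor = zlib.compressobj(9, zlib.DEFLATED, zlib.MAX_WBITS)
    return compressor.compress(data) + compressor.flush()


# One scanline containing every gray level once: no byte sequence repeats,
# so LZ77 finds no matches in the unfiltered data.
row = bytes(range(256))
unfiltered_stream = b"\x00" + row           # filter type 0 (None)
filtered_stream = b"\x01" + sub_filter(row)  # filter type 1 (Sub)

raw_size = len(deflate(unfiltered_stream))
filtered_size = len(deflate(filtered_stream))
print(raw_size, filtered_size)  # the filtered stream compresses to far fewer bytes
```

After the Sub filter the scanline is almost entirely the byte 1, so LZ77 can cover it with a few long matches and Huffman coding has very few distinct symbols to encode.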

Good encoders apply at least the filtering heuristics.

Programs used:

ECT (Efficient Compression Tool) — https://github.com/fhanau/Efficient-Compression-Tool

PNGWolfZopfli — https://github.com/jibsen/pngwolf-zopfli

OptiPNG — http://optipng.sourceforge.net

TruePNG — http://x128.ho.ua/pngutils.html

AdvanceCOMP — http://www.advancemame.it

DeflOpt — http://www.walbeehm.com/download

Zopfli is a compression library written in C that performs very good, but slow, Deflate or zlib compression. It was created by Lode Vandevenne and Jyrki Alakuijala, based on an algorithm by Jyrki Alakuijala.

There are a number of factors that affect the size of PNG image files, such as the number of colors in the image and whether the image data is stored as RGBA data or in the form of references to a color palette. The main factor is the quality of the Deflate compression used to compress the image data, which is in turn affected by the quality of the compressor and how well the data to be compressed is arranged.
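To make the palette point concrete, the following sketch compares the amount of pixel data before compression for RGBA storage and for an 8-bit palette. The function name and the sample image are hypothetical, and the estimate ignores chunk headers and per-scanline filter bytes. It relies on two facts of the PNG format: a palette holds at most 256 entries, and each entry costs 3 bytes of RGB plus at most 1 byte of alpha.

```python
def raw_sizes(pixels):
    """Return (rgba_bytes, palette_bytes) before compression.

    palette_bytes is None when the image has more than 256 distinct colors,
    because a PNG palette cannot hold them.
    """
    distinct = set(pixels)
    rgba_bytes = 4 * len(pixels)
    if len(distinct) > 256:
        return rgba_bytes, None
    # One index byte per pixel, plus up to 4 bytes per palette entry (RGB and alpha).
    palette_bytes = len(pixels) + 4 * len(distinct)
    return rgba_bytes, palette_bytes


# A 100 x 100 image that uses only four colors.
colors = [(255, 0, 0, 255), (0, 255, 0, 255), (0, 0, 255, 255), (0, 0, 0, 0)]
pixels = [colors[(x + y) % 4] for y in range(100) for x in range(100)]

print(raw_sizes(pixels))  # (40000, 10016)
```

The compressor then starts from roughly a quarter of the data, which is why reducing an image to palette form is attempted before the Deflate stage is tuned.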

The PNG format supports a number of scanline filters that transform the image data by relating nearby pixels mathematically. Choosing the right filters for each scanline can make the image data more compressible. It is, however, infeasible for non-trivial images to find the best filters so typical encoders rely on a couple of heuristics to find good filters.
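One widely used heuristic, suggested in the PNG specification, is the minimum sum of absolute differences: for each scanline, try all five filter types and keep the one whose output, read as signed bytes, has the smallest total magnitude. The Python sketch below is a simplified illustration with hypothetical function names; which heuristics each bundled tool actually uses is not stated here.

```python
def paeth(a: int, b: int, c: int) -> int:
    """Paeth predictor: whichever of left (a), above (b), upper left (c) is closest to a + b - c."""
    p = a + b - c
    pa, pb, pc = abs(p - a), abs(p - b), abs(p - c)
    if pa <= pb and pa <= pc:
        return a
    return b if pb <= pc else c


def filter_row(ftype: int, row: bytes, prev: bytes, bpp: int) -> bytes:
    """Apply PNG filter 0 (None), 1 (Sub), 2 (Up), 3 (Average) or 4 (Paeth) to one scanline."""
    out = bytearray(len(row))
    for i, x in enumerate(row):
        a = row[i - bpp] if i >= bpp else 0   # left neighbour
        b = prev[i]                           # byte above
        c = prev[i - bpp] if i >= bpp else 0  # upper left neighbour
        predictor = (0, a, b, (a + b) // 2, paeth(a, b, c))[ftype]
        out[i] = (x - predictor) & 0xFF
    return bytes(out)


def msad_cost(filtered: bytes) -> int:
    """Sum of magnitudes when each byte is read as a signed value."""
    return sum(v if v < 128 else 256 - v for v in filtered)


def choose_filters(rows, bpp: int = 1):
    """Pick, for each scanline, the filter type with the lowest cost (ties go to the lower type)."""
    prev = bytes(len(rows[0]))  # the row above the first scanline counts as all zeros
    chosen = []
    for row in rows:
        chosen.append(min(range(5), key=lambda f: msad_cost(filter_row(f, row, prev, bpp))))
        prev = row
    return chosen


# Two identical horizontal ramps: Sub suits the first row, Up the second.
ramp = bytes(range(0, 80, 10))
print(choose_filters([ramp, ramp]))  # [1, 2]
```

The heuristic looks at one scanline at a time and never runs the compressor, so it is fast but can miss combinations that would compress better as a whole.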

PNGWolf employs a genetic algorithm to find better filter combinations than traditional heuristics. It derives a couple of filter combinations heuristically, adds a couple of random combinations, and then checks how well each combination compresses. Two very different combinations may compress similarly well: for instance, one combination may be very good for the first couple of scanlines, while the other may be very good for the last couple of scanlines. So taking the beginning of one combination and the tail of the other to make a new one may result in a combination that compresses better than the original two.
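The crossover idea can be sketched in a few lines of Python. This is a toy illustration, not PNGWolf's actual implementation: it uses only three filter types, one byte per pixel, `zlib` as the fitness function, and hypothetical names and parameters (`evolve`, `population_size`, the mutation rate) chosen for the example.

```python
import random
import zlib


def apply_filter(ftype: int, row: bytes, prev: bytes) -> bytes:
    """Filter one scanline with type 0 (None), 1 (Sub) or 2 (Up)."""
    out = bytearray(len(row))
    for i, x in enumerate(row):
        if ftype == 1:
            predictor = row[i - 1] if i else 0
        elif ftype == 2:
            predictor = prev[i]
        else:
            predictor = 0
        out[i] = (x - predictor) & 0xFF
    return bytes(out)


def compressed_size(rows, combo) -> int:
    """Fitness: Deflate size of the image when row i is filtered with combo[i]."""
    stream = bytearray()
    prev = bytes(len(rows[0]))
    for ftype, row in zip(combo, rows):
        stream.append(ftype)
        stream += apply_filter(ftype, row, prev)
        prev = row
    return len(zlib.compress(bytes(stream), 9))


def crossover(parent_a, parent_b, cut):
    """Beginning of one combination joined to the tail of the other."""
    return parent_a[:cut] + parent_b[cut:]


def evolve(rows, generations=20, population_size=8, seed=0):
    rng = random.Random(seed)
    n = len(rows)
    population = [[f] * n for f in (0, 1, 2)]  # simple seeds: one filter everywhere
    while len(population) < population_size:   # plus a few random combinations
        population.append([rng.randrange(3) for _ in range(n)])
    for _ in range(generations):
        population.sort(key=lambda combo: compressed_size(rows, combo))
        survivors = population[: population_size // 2]  # keep the better half
        children = []
        while len(survivors) + len(children) < population_size:
            a, b = rng.sample(survivors, 2)
            child = crossover(a, b, rng.randrange(1, n))
            if rng.random() < 0.3:                       # occasional mutation
                child[rng.randrange(n)] = rng.randrange(3)
            children.append(child)
        population = survivors + children
    return min(population, key=lambda combo: compressed_size(rows, combo))


# Top half: horizontal ramps. Bottom half: one irregular row repeated.
top = [bytes((x * 5 + y * 37) & 0xFF for x in range(64)) for y in range(8)]
bottom = [bytes((x * x * 7 + 13 * x) & 0xFF for x in range(64))] * 8
rows = top + bottom

best = evolve(rows)
print(best, compressed_size(rows, best))
```

Because the better half of each generation is carried over unchanged, the result is never worse than the best starting combination; the price is that every candidate requires a full compression run, which is why this approach is slow.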

Run only one instance of the program at a time!

Each file in the list shows its own status: «running», «done», and «saved space».

Full stats:

1. Number of optimized files (total and current session).

2. Files in the queue (quantity).

3. Saved disk space (MB), in total and for each file in the list.

(To keep the overall statistics («Total»), back up the files «ressize.txt» and «resnumbers.txt» before updating the program.)

Size (7z): 25 MB